A new research paper from Minds, an alt-tech, blockchain-based social media platform, challenges the notion that individuals with extreme views need to be removed from social networks. The Minds research team argues that deplatforming drives more people toward violent extremism and kills any chance of deradicalization.

“The Censorship Effect” examines “the adverse effects of social media censorship and proposes an alternative moderation model based on free speech and Internet freedom.”

The paper is part of the platform’s Change Minds initiative, a push to engage with controversial views rather than scrub them from the web.

Minds CEO and co-founder Bill Ottman has a vision for reimagining how social networks approach radical content.

“None of these sites have consistent policies. There [are] no principles,” Ottman, also one of the paper’s authors, told Timcast. “They seem to just be responding to whatever the whims of whatever is currently trending or popular.”

An advocate of free information, the tech executive said major tech platforms regularly miss opportunities to deradicalize users when they remove them from their sites.

“We need to convince the left, particularly, that if your goal is deradicalization and to actually have a positive impact on global discourse, you cannot be pro-censorship,” Ottman said. “I think it is foundationally necessary to have access to as much information and speech as possible in order to have the maximum impact and to be able to make informed decisions.”

The Change Minds team contends that, through research and data analysis, it can show that free speech environments lead to deradicalization, “which is a fair assumption because if you ban those people there is no way you are ever going to deradicalize them because you just banned them,” according to Ottman.

Deplatforming controversial or radical users, either by suspending or banning their accounts, is often considered essential to preventing the spread of false information and misinformation. However, Ottman says this claim actually sits in a research “gray area” and could be considered a misconception.

“Yes, censorship can limit the reach of certain individuals or topics in an isolated network,” Ottman said. “Obviously, if Twitter bans Trump, then Trump’s reach on Twitter individually is reduced.”

But a ban, especially of a major figure, can also increase interest in and conversation about the banned user on the platform, making the topic more viral.

For users who do not have a significant following, being banned can drive them to another platform and motivate them to become more radicalized, Ottman states.

Ottman and his team believe the way to push larger platforms to base their user policies on data is to demonstrate the effectiveness of their own approach through research.

“You would think that they would want data to back up the fact that censorship is actually better for the world,” Ottman said. Without empirical data to guide their actions, Ottman thinks it is reasonable to assume that Big Tech's deplatforming policies are driven by ideology.

The authors of “The Censorship Effect” emphasize the importance of distinguishing between “cognitive radicalization,” or holding extreme beliefs, and “violent extremism,” or posing a clear threat, showing clear support for violence, or actively engaging in it.

“In science, it is crucial to understand that correlation does not equate to causality,” the study’s authors write. “While many violent extremists or terrorists radicalized, at least in part, online and often engage with radical or violent extremist content over social media, there is a difference between radicalizing while using social media and being radicalized by social media.”

With the goal of deradicalizing as many users as possible, the Change Minds initiative focuses on positive intervention and voluntary dialogue.

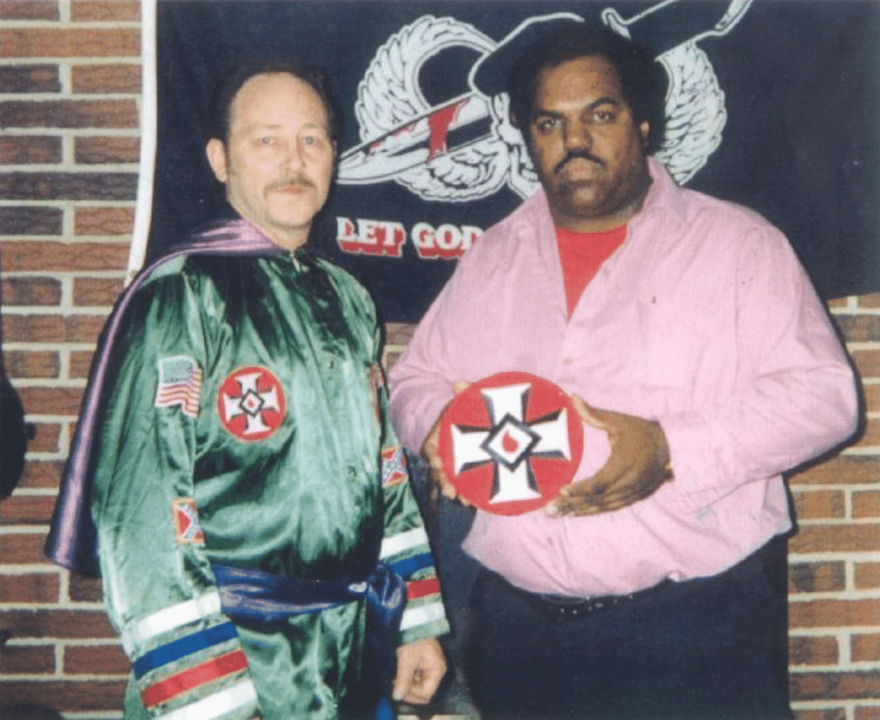

Minds worked with Daryl Davis to develop a practical way to confront extreme views on the platform instead of simply erasing the content by removing the users.

Davis, an African-American recording artist, describes himself as a race relations expert. He wrote the book Klan-Destine Relationships about his experience building relationships with members of the Ku Klux Klan to understand the roots of racial prejudice. He says he opened conversations by asking, “How can you hate me when you do not even know me?” and has persuaded at least 200 people to leave the KKK.

“Daryl proved that by befriending neo-Nazis and KKK members… that is how you result in deradicalization,” Ottman said. “You don’t bully somebody out of ideology. You have to listen to them and treat them as a human for there to be any chance to change.”

Davis helped train moderators at Minds to approach their work from a foundation of deradicalization and empathy, prioritizing a desire to connect over a sense of being triggered. Ottman stressed the importance of ensuring that moderators on tech platforms are qualified and prepared to engage with graphic or extreme content.

Ottman says he envisions those involved in the initiative as people ready to hold conversations with users who post a range of radical content, from racial supremacy to suicide and self-harm.

“A lot of people just need someone to talk to,” Ottman said.

Internal Facebook documents released in October 2021 indicated that the platform devoted significant financial resources to managing content considered harmful.

A 2019 post titled “Cost control: a hate speech exploration” examined how the company could reduce its spending on hate-speech moderation.

“Over the past several years, Facebook has dramatically increased the amount of money we spend on content moderation overall,” the post states. “Within our total budget, hate speech is clearly the most expensive problem: while we don’t review the most content for hate speech, its marketized nature requires us to staff queues with local language speakers all over the world, and all of this adds up to real money.”

Without specifying an amount, the report indicated that 74.9% of Facebook’s hate-speech spending went to reactive costs, while proactive efforts accounted for 25.1%.

“Imagine if you took even half of those resources and put them toward positive intervention — people who aren’t going around banning users but who are around and reaching out to them and who are actually trying to create dialogue,” Ottman said. “I think you would see a massive impact. People would actually feel respected, they wouldn’t feel victimized.”

In addition to having its moderators intervene rather than purge content, Minds uses “Not Safe For Work” filters to control how freely accessible explicit or radical content is on the platform.

Ottman said the platform relies on community reporting. Once content is reported, it is placed behind a filter rather than removed.

Minds users can also build their own algorithms and select the content they want to see.

Ottman said his team will continue to study the progress of its deradicalization efforts over the next ten years. Among the metrics it will track is the number of users who choose to include opposing perspectives in their newsfeeds.

While Ottman did not give specific details on how Minds identifies users it considers extreme, he said the company is careful to protect users’ privacy and does not collect data in a way that could later be sold.

In Ottman’s view, Minds is uniquely positioned to lead an anti-censorship movement among tech platforms because it prioritizes neutrality more than other alternatives to larger social media sites.

“I don’t think that the red version of the blue site is the right move,” Ottman said. “I don’t think that they are approaching this in a long-term sustainable way.”

Gettr, TRUTH, and Parler attract only like-minded users and lack transparency, the executive said. Ottman argues that because these platforms are not open-source, there is no way to independently verify whether they actually operate any differently from Facebook and Twitter.

Ottman called Gab “an interesting case” because, while the platform is open-source, it is heavily “conservative focused … almost religious.”

“They are being good in terms of transparency but I think that they are also inaccessible to the left,” Ottman said. “There is no way anyone on the left would join Gab and feel welcome.”

Ultimately, Ottman hopes his team’s research and Minds’ moderation policies will push large tech companies to reconsider whether banning users they consider extreme is the best course of action. If the company’s data shows that censoring extreme users is more likely to lead to extremist violence, Big Tech may need to consider that engaging in debate produces better outcomes than canceling unwelcome views.

H/T Timcast